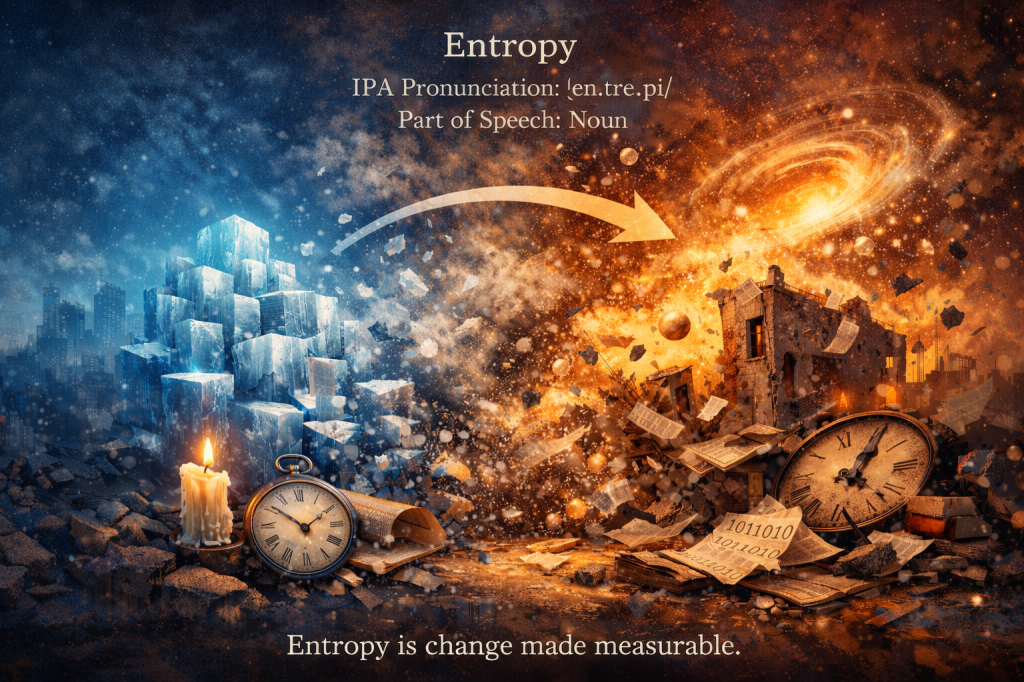

Entropy

IPA Pronunciation: /ˈɛn.trə.pi/

Part of Speech: Noun

Origin

Entropy belongs to the vocabularies of thermodynamics, physics, information theory, and philosophy. It refers to a measurable quantity representing disorder, randomness, or the number of microscopic configurations consistent with a system’s state.

Physicists describe entropy as a directional arrow hidden inside matter — a tendency for organized systems to disperse, equilibrate, and spread energy. It governs why heat flows, why structures decay, and why time appears to move forward.

Entropy is change made measurable.

Etymology

From Greek: en- (in, within) and tropē (transformation, turning).

Coined by Rudolf Clausius in 1865 to parallel the word energy, preserving the sense of internal transformation.

Core Definitions

Thermodynamic Quantity

A measure of energy dispersal or microscopic disorder.

“The entropy of the isolated system increased.”

Direction of Natural Processes

Indicator of irreversibility in physical change.

“Melting ice raises entropy.”

Information Measure

In information theory, a measure of uncertainty or unpredictability.

“The message had high entropy.”

Explanation & Nuance

Entropy describes how many ways a system can be arranged internally without changing its outward appearance.

Low-entropy systems are:

Ordered

Structured

Concentrated

Predictable

High-entropy systems are:

Dispersed

Random

Diffuse

Statistically probable

A crystal has low entropy.

A gas filling a room has high entropy.

Entropy does not mean chaos alone — it means multiplicity of possible states.
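The idea of "multiplicity" can be made concrete with a toy system. The sketch below is only an illustration, not a physical model: it treats ten coins as the system, the number of heads as the outward appearance (the macrostate), and each exact heads/tails sequence as one internal arrangement (a microstate). The function names `multiplicity` and `entropy_in_units_of_k` are made up for this example.

```python
from math import comb, log


def multiplicity(n_coins: int, n_heads: int) -> int:
    """Count the exact heads/tails sequences that show n_heads heads."""
    return comb(n_coins, n_heads)


def entropy_in_units_of_k(n_coins: int, n_heads: int) -> float:
    """Boltzmann-style entropy divided by k_B: the natural log of the multiplicity."""
    return log(multiplicity(n_coins, n_heads))


if __name__ == "__main__":
    n = 10
    for heads in (0, 2, 5):
        # Print the macrostate, how many microstates realize it, and its entropy.
        print(heads, multiplicity(n, heads), entropy_in_units_of_k(n, heads))
```

With ten coins, "all tails" can happen in exactly 1 way, "two heads" in 45 ways, and "five heads" in 252 ways. The evenly mixed state has the highest entropy simply because far more arrangements produce it.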

Scientific Significance

In physics, entropy is central to the Second Law of Thermodynamics, which states that in an isolated system:

Total entropy tends to increase over time and, for macroscopic systems, does not decrease.

This principle governs:

Heat flow

Chemical reactions

Phase transitions

Cosmic evolution

It explains why:

Hot objects cool

Stars burn out

Structures decay

Time seems directional

Without the tendency of entropy to increase, physical processes would look the same run forward or backward, and nothing would distinguish past from future.
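The statistical character of this tendency can be seen in a small simulation. The sketch below uses the Ehrenfest urn model, a standard textbook toy and not something taken from this entry: particles sit in the left or right half of a box, and at each step one randomly chosen particle hops to the other half. The function name `simulate_mixing` and its default parameters are assumptions chosen for illustration.

```python
import random
from math import comb, log


def simulate_mixing(n_particles: int = 100, n_steps: int = 1000, seed: int = 0) -> list[float]:
    """Ehrenfest urn model: each step, one randomly chosen particle changes sides.

    Returns the entropy (log of the multiplicity of the left/right split)
    at the start and after every step.
    """
    rng = random.Random(seed)
    n_left = n_particles  # start fully ordered: every particle on the left
    history = [log(comb(n_particles, n_left))]
    for _ in range(n_steps):
        # The chosen particle is on the left with probability n_left / n_particles.
        if rng.random() < n_left / n_particles:
            n_left -= 1
        else:
            n_left += 1
        history.append(log(comb(n_particles, n_left)))
    return history


if __name__ == "__main__":
    entropies = simulate_mixing()
    print("start:", entropies[0], "end:", entropies[-1])
```

The run starts at zero entropy, because there is only one way to put every particle on the left, and drifts toward the evenly mixed state. Individual steps can lower the entropy slightly, which is why the law is best read as an overwhelming statistical tendency in large systems.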

Mathematical Perspective

Entropy can be expressed quantitatively.

The thermodynamic form relates a change in entropy to the heat exchanged and the temperature at which the exchange happens.

Statistical mechanics defines it through the number of microscopic arrangements compatible with a system’s macrostate.
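In standard textbook notation, these two forms are usually written as shown below. The symbols are conventional and do not come from this entry: S is entropy, δQ_rev is heat exchanged in a reversible process, T is absolute temperature, k_B is the Boltzmann constant, and Ω is the number of microscopic arrangements compatible with the macrostate.

```latex
% Thermodynamic (Clausius) form: entropy change from reversible heat exchange
dS = \frac{\delta Q_{\mathrm{rev}}}{T}

% Statistical (Boltzmann) form: entropy from the count of microstates
S = k_B \ln \Omega
```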

Information theory defines it as:

Average uncertainty per symbol.
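For a source whose symbols appear with probabilities p, Shannon's measure is the sum of p × log2(1/p) over all symbols, given in bits. The sketch below estimates it from the symbol frequencies of a single message. It treats each character as an independent symbol, which is a simplifying assumption, and the function name `shannon_entropy` is chosen here for illustration.

```python
from collections import Counter
from math import log2


def shannon_entropy(message: str) -> float:
    """Estimate average uncertainty per symbol, in bits, from symbol frequencies."""
    if not message:
        return 0.0
    counts = Counter(message)
    total = len(message)
    # Sum p * log2(1 / p) over the distinct symbols, where p = count / total.
    return sum((c / total) * log2(total / c) for c in counts.values())


if __name__ == "__main__":
    for text in ("aaaa", "abab", "abcd"):
        print(text, shannon_entropy(text))
```

A message made of one repeated symbol carries no uncertainty (0 bits per symbol), two equally likely symbols give 1 bit, and four equally likely symbols give 2 bits.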

Thus, entropy links:

Physics

Probability

Information

Time

Everyday Analogies

Ink dispersing in water

A shuffled deck of cards

A messy room

Perfume diffusing through air

All illustrate systems moving from states with fewer possible arrangements to states with more.
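The shuffled deck is the easiest of these to put a number on. The sketch below counts the possible orderings of a deck and converts that count into bits, assuming every ordering is equally likely after a thorough shuffle. The function names `arrangements` and `bits_to_specify_order` are illustrative.

```python
from math import factorial, log2


def arrangements(n_cards: int = 52) -> int:
    """Number of distinct orderings of a deck of n_cards different cards."""
    return factorial(n_cards)


def bits_to_specify_order(n_cards: int = 52) -> float:
    """Bits needed to single out one ordering when all are equally likely."""
    return log2(arrangements(n_cards))


if __name__ == "__main__":
    print(arrangements())
    print(bits_to_specify_order())
```

A brand-new deck sits in one specific order. A well-shuffled deck could be in any of 52! orders, a number with 68 digits, so pinning down which one takes roughly 226 bits.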

Examples in Context

Scientific:

“Entropy increases in irreversible processes.”

Everyday:

“Leaving things alone invites entropy.”

Computational:

“The algorithm reduces entropy in the data.”

Philosophical:

“Civilizations struggle against entropy.”

Metaphorical:

“His plans dissolved into entropy.”

Symbolic Dimensions

Drift — tendency toward dispersion

Fading — loss of structure

Probability — dominance of the most likely state

Time’s Arrow — direction embedded in matter

Dissolution — order relaxing into possibility

Entropy symbolizes inevitability without intention.

Synonyms & Near-Relations

Disorder — general condition

Randomness — unpredictability

Dissipation — spreading of energy

Equilibrium — final state

Decay — structural breakdown

(Only entropy unifies all these under a single measurable principle.)

Conceptual Relations

Irreversibility — one-way processes

Time — directional flow

Probability — statistical likelihood

Energy — transformation capacity

Complexity — structure versus distribution

Cultural & Intellectual Resonance

Physics

Defines the limits of engines, computation, and physical processes.

Philosophy

Used to discuss impermanence and the fate of systems.

Information Science

Measures uncertainty and data compression limits.

Literature & Art

Symbolizes decline, dissolution, or the passage of time.

Takeaway

Entropy names the quiet law governing change —

the tendency of all things to spread, soften, and rearrange.

It reminds us that order is local,

effort is temporary,

and equilibrium is patient.

Entropy is not destruction,

but redistribution.

It is the mathematics of becoming,

the statistic behind time,

the whisper that all structures,

however intricate,

lean gently toward possibility.

Entropy is time’s quiet signature written into matter.
